
Artificial intelligence (AI) is rapidly emerging as a defining force in the global economy. It is already accelerating progress in many industries including climate modelling, agriculture and healthcare[1].

At the same time, its expansion is driving demand for energy-intensive data centres[2], putting pressure on electricity systems and adding to emissions and water use. It is also raising issues around labour displacement, bias and misinformation, data privacy and market concentration.

This tension is the crux of the ethical AI debate.

For investors, the challenge is no longer whether to invest in AI but whether it can be done in a way that aligns with responsible investment principles.

AI and sustainability: Two sides of the same coin

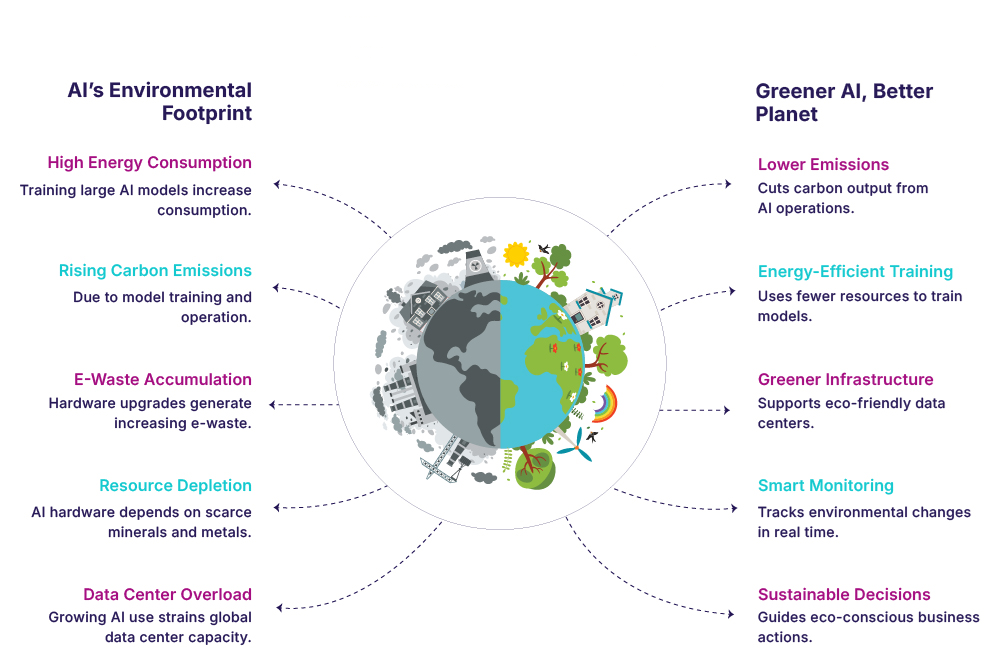

Much of the debate around AI and sustainability has centred on its environmental footprint.

Training and operating large models require significant computational power. The data centres supporting these workloads consume vast amounts of electricity and depend on advanced cooling systems. The International Energy Agency (IEA) expects electricity demand from AI data centres to quadruple by 2030[3].

While these concerns are well-founded, they capture only one side of the equation. AI is also emerging as a tool to improve efficiency across high-emissions sectors[4]. It is being used to optimise electricity grids and integrate renewables, to enhance industrial processes and reduce their energy intensity, and to improve building energy management. AI is therefore both a consumer of energy and a potential enabler of decarbonisation. Its net impact will depend on how it is deployed and on the nature of the energy systems supporting it[5].

Source: https://www.binarysemantics.com/blogs/sustainable-ai-the-role-of-green-ai-in-climate-action/

For investors, AI is not a single, homogeneous theme but a layered ecosystem. Its environmental and social implications vary significantly depending on where a company operates within that ecosystem.

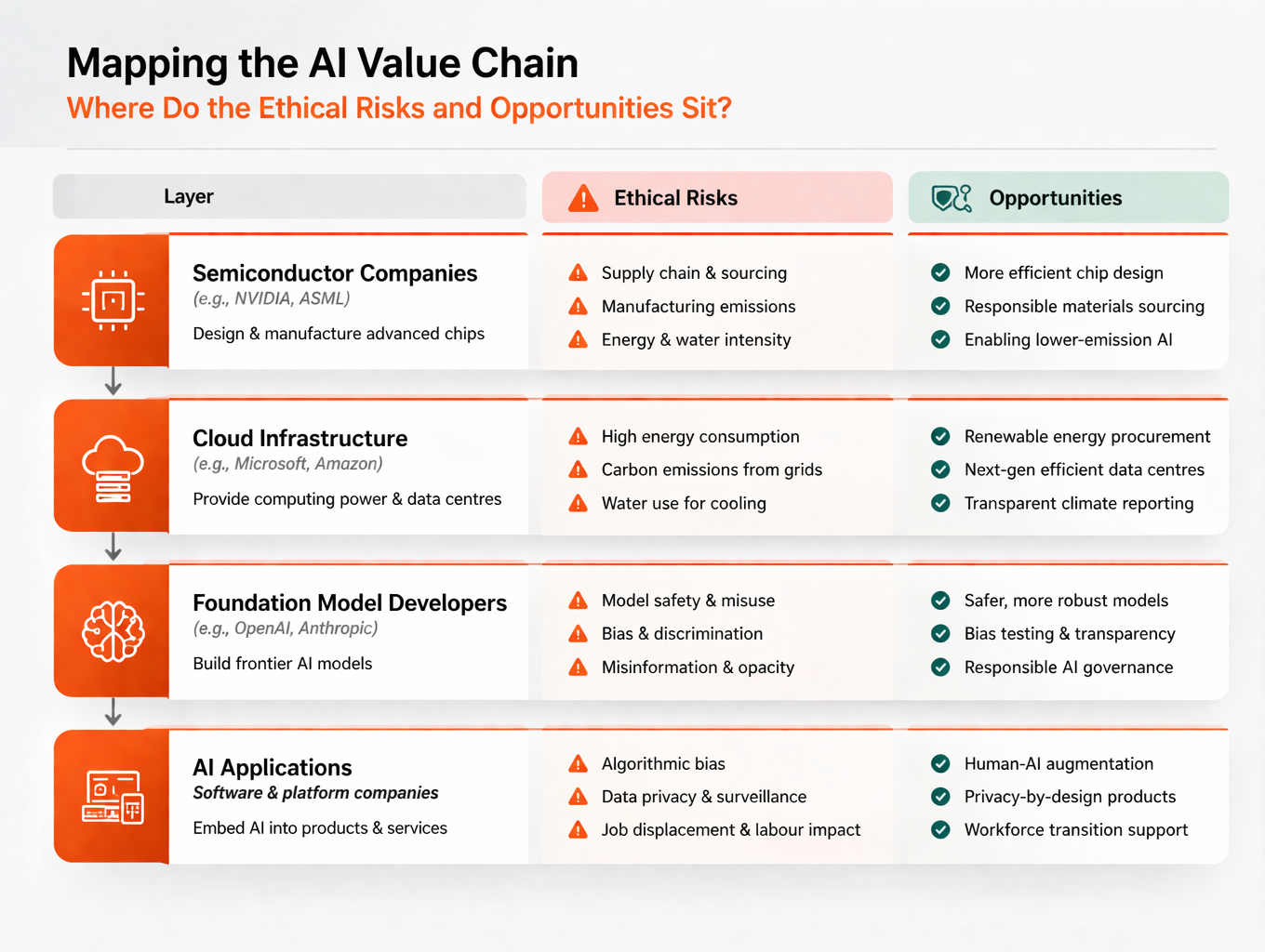

Mapping the AI value chain: Where do the ethical risks and opportunities sit?

In our view, the environmental risks of AI are concentrated primarily in the infrastructure layers, while social and governance risks sit closer to the end user. We believe that a layered structure provides a more nuanced way to approach the AI theme. Rather than treating AI as a binary decision, this allows investors to differentiate between parts of the ecosystem based on their risk profile and potential for positive impact.

Source: Betashares

Semiconductor companies such as Nvidia (NASDAQ: NVDA) and ASML (NASDAQ: ASML) form the backbone of this ecosystem. Their key ESG considerations centre on supply chain resilience as well as the energy and water intensity of manufacturing. These companies may be one step removed from how AI is deployed but they are not fully insulated from its downstream impacts. The use of their products contributes to the overall energy demand of AI systems and may be reflected in Scope 3 emissions, even if these impacts are not directly controlled and are harder to measure.

The layer following semiconductors is the cloud infrastructure layer, where hyperscale providers deliver the computing power required to scale AI. This is where the environmental footprint is most concentrated[6]. As adoption accelerates, this layer is likely to drive incremental energy demand, but it also offers the greatest opportunity for efficiency gains and renewable energy integration.

At the model layer, developers such as OpenAI and Anthropic sit at the centre of governance and societal risks such as safety, misuse, bias, misinformation and transparency. Software and platform companies that embed AI into products and services used by businesses and consumers sit at the application layer. This is where social impact and the real-world benefits of AI become most visible.

Playing AI through an ethical lens: Choosing the right parts of the value chain

So how does this translate into portfolio construction?

In our view, playing AI ethically is less about avoidance and more about directing exposure toward parts of the value chain where risks are more manageable and outcomes better align with sustainability objectives.

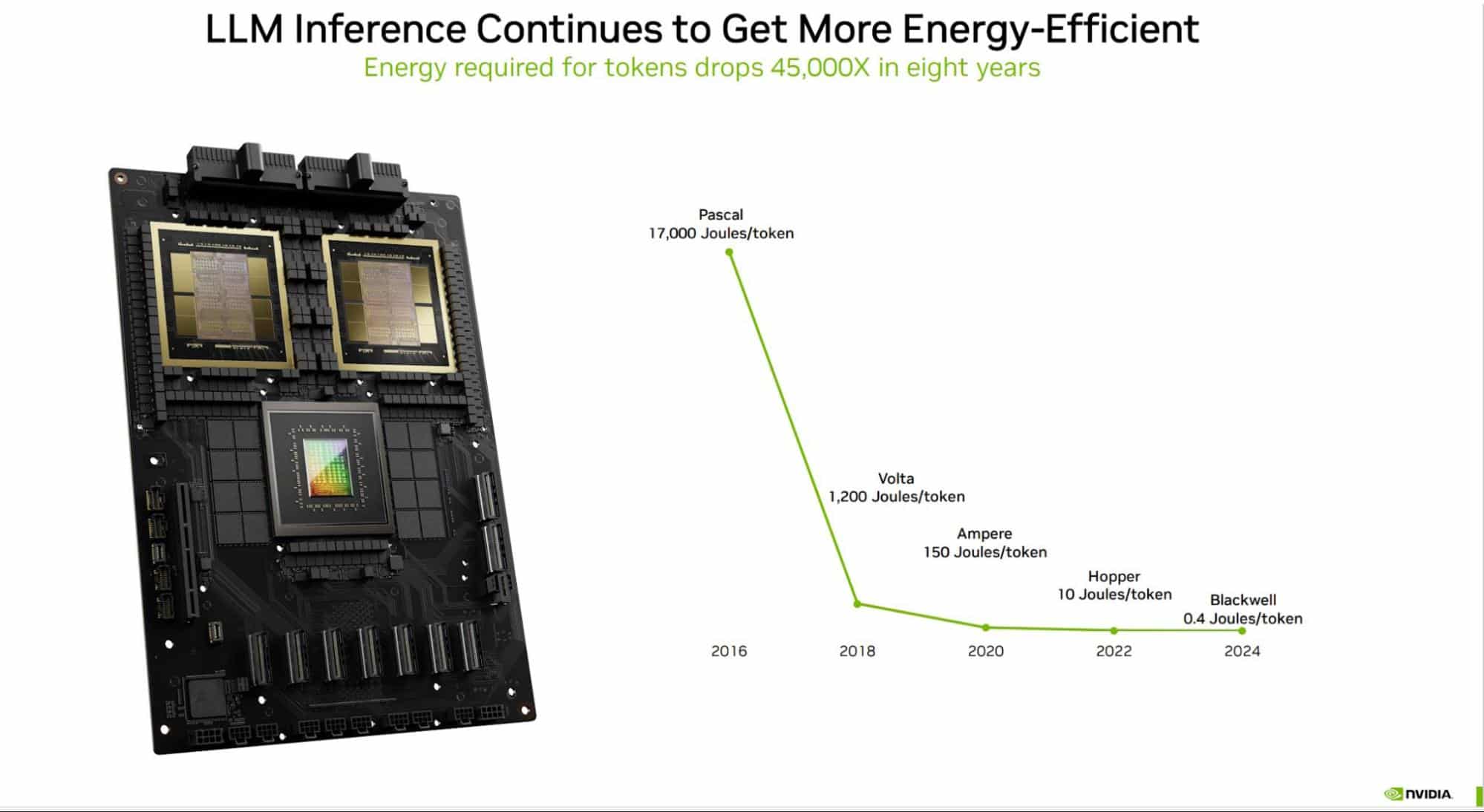

This often begins with the infrastructure layer. Companies such as Nvidia are at the core of the ecosystem, providing the computational power for AI. While AI growth has increased overall energy demand, successive GPU generations have improved performance per watt, reducing the energy needed for each unit of computation.

Source: NVIDIA

Beyond chip design, Nvidia is also advancing system-level efficiency through software optimisation and accelerated computing, alongside broader sustainability initiatives across its operations and supply chain[7].
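The difference between performance per watt and total energy use can be made concrete with simple arithmetic. The sketch below is illustrative only: the function name `energy_change` and both scenarios are hypothetical, and the growth and efficiency factors are made-up inputs rather than figures from NVIDIA, the IEA or any other source. It assumes total energy scales with the amount of computation divided by performance per watt.

```python
def energy_change(compute_growth: float, efficiency_gain: float) -> float:
    """Return the multiple by which total energy use changes.

    compute_growth: factor by which total AI computation grows (hypothetical).
    efficiency_gain: factor by which performance per watt improves (hypothetical).
    """
    if compute_growth <= 0 or efficiency_gain <= 0:
        raise ValueError("both factors must be positive")
    # Energy is computation divided by computation-per-unit-of-energy,
    # so the change in energy is the ratio of the two factors.
    return compute_growth / efficiency_gain


# Illustrative inputs only, not sourced data.
scenarios = {
    "efficiency outpaces demand": (2.0, 4.0),
    "demand outpaces efficiency": (10.0, 4.0),
}

for label, (growth, gain) in scenarios.items():
    print(f"{label}: total energy x{energy_change(growth, gain):.2f}")
```

Under these assumed inputs, the same fourfold efficiency gain halves total energy use in the first scenario and leaves it two and a half times higher in the second, which is why efficiency improvements and rising overall demand can coexist.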

Further upstream, ASML plays a critical role in enabling advanced semiconductor manufacturing. While chip fabrication is energy and water intensive, ASML is increasing material circularity and working with customers to reduce energy use per wafer. It is also setting emission reduction targets and pursuing system-level improvements that can scale across the supply chain[8].

At the application layer, Salesforce and Adobe are embedding AI into enterprise and creative platforms with a strong emphasis on governance. Salesforce has established an Office of Ethical and Humane Use of Technology and introduced its Einstein Trust Layer to support data privacy, transparency and safe use of generative AI[9],[10]. Adobe has integrated responsible AI principles across its product suite, with a focus on content authenticity and attribution[11].

The common thread across these examples is not the absence of risk, but that these companies operate in areas where risks are more measurable and that they are open to engagement.

For investors, this reinforces the importance of viewing AI through a value chain lens. Rather than taking a broad approach, investors can assess where risks and opportunities sit across the ecosystem, allocate capital to areas they are most comfortable with, and engage with companies to better understand how AI is being developed, governed and deployed in practice.

Responsible AI and engagement: The ethical investor’s edge

While selecting the right companies is an important part of playing the AI theme responsibly, it is only part of the equation. Investors are increasingly thinking not only about where to invest but also about how responsibly companies are developing and deploying AI.

The CSIRO’s Responsible AI framework provides a practical foundation for investor engagement on AI governance and responsible use in Australia. It outlines how organisations can identify and manage risks relating to fairness, accountability, transparency, privacy and safety, and encourages governance, impact assessments and controls across the AI lifecycle[12].

A framework such as this enables more structured engagement. Rather than broad discussions, investors can focus on governance, oversight and risk management, including how companies address bias, misinformation and misuse, and how real-world impacts are assessed and monitored.

In practice, engagement is typically undertaken by fund managers on behalf of investors. However, investors can themselves play a role in setting expectations, selecting managers with strong stewardship capabilities and shaping how these issues are prioritised.

As AI continues to evolve, this shared responsibility and ongoing dialogue between investors, asset managers and companies will be critical.

Is there an ethical way to play AI?

AI is at the intersection of the most powerful forces shaping markets today, from energy systems and labour dynamics to data, geopolitics and technological concentration. As a result, it does not lend itself to simple labels of “ethical” or “unethical” in the way traditional sectors might.

For investors, the implication is clear. As AI continues to evolve, ongoing dialogue between investors and companies will play a central role.

Outcomes will depend not just on exposure, but on how that exposure is understood and managed over time. Looking through the value chain, assessing environmental and societal impacts, and engaging on responsible AI practices will all be critical to navigating both the risks and opportunities.

In that sense, the ethical way to play AI is not a fixed answer, but a moving target. It requires a process that evolves alongside the technology itself, adapting to new risks, new opportunities and new expectations. And in a theme evolving as quickly as this, that process may matter more than the starting point.

NVIDIA Corporation, ASML Holding, Salesforce, Inc. and Adobe Inc. are held by the Betashares Global Sustainability Leaders ETF (ASX: ETHI) as at 31 March 2026. ETHI provides a ‘true-to-label’ ethically screened exposure to global companies. It includes a portfolio of large global companies identified as ‘Climate Leaders’ that have also passed screens to exclude companies with direct or significant exposure to fossil fuels or engaged in activities deemed inconsistent with responsible investment considerations. Investors should undertake their own research to understand the AI value chain and consider which parts they are comfortable allocating to, given the range of environmental, social and governance risks and opportunities.

No assurance is given that any of the companies in ETHI’s portfolio will be profitable investments.

There are risks associated with an investment in ETHI, including market risk, international investment risk, non-traditional index methodology risk and foreign exchange risk. Investment value can go up and down. An investment in the Fund should only be made after considering your circumstances, including your tolerance for risk. For more information on risks and other features of the Fund, please see the Product Disclosure Statement and Target Market Determination, both available on this website.

[1] https://www.oecd.org/en/publications/2024/05/oecd-digital-economy-outlook-2024-volume-1_d30a04c9/full-report/component-5.html?

[2] https://www.morganstanley.com/ideas/ai-energy-demand-infrastructure

[3] https://www.theguardian.com/technology/2025/apr/10/energy-demands-from-ai-datacentres-to-quadruple-by-2030-says-report

[4] https://blog.se.com/industry/2024/11/29/what-is-predictive-ai/

[5] https://www.reuters.com/sustainability/climate-energy/how-ai-is-powering-energy-transition-smart-grids-fusion--ecmii-2026-02-02/

[6] https://news.mit.edu/2025/explained-generative-ai-environmental-impact-0117

[7] https://images.nvidia.com/aem-dam/Solutions/documents/NVIDIA-Sustainability-Report-Fiscal-Year-2025.pdf

[8] https://www.asml.com/en/investors/annual-report

[9] https://www.salesforce.com/company/responsible-ai-and-technology/

[10] https://www.salesforce.com/products/einstein/trust-layer/

[11] https://www.adobe.com/ai/responsible-ai.html

[12] https://www.csiro.au/en/research/technology-space/ai/responsible-ai/rai-esg-framework-for-investors?